I’ll preface this by saying that I use Claude for many tasks. It is fairly capable, and the errors it makes usually scale with the complexity of the code I’m working on. I have had it assist me while building out an entire class-based aggregation project, and it dives into existing files and is able to determine how they work… Eventually.

Like any model, it starts to forget what happened earlier in the conversation, and without explicit instructions it can get confused. Also, giving any model unrestricted, direct access to your filesystem is a quick and easy way to learn what not to do, and why you don’t work on production code and databases.

For this project, I was working with a GeekMagic SmallTV Ultra, and I wanted to create an app that could make this little device usable in a way that made sense. No hacking firmware, no complex changes, just a simple run-and-done app. I dreamed it up last night.

Anyway, going into this, I was starting from scratch. All I knew, from another GitHub project I looked at, was that you could render images and upload them to the device. That project was written in Python, and my knowledge of Python syntax is elementary at best (PHP for life!). Still, I was sure it had the basis of what I needed, and it was a good place to start.

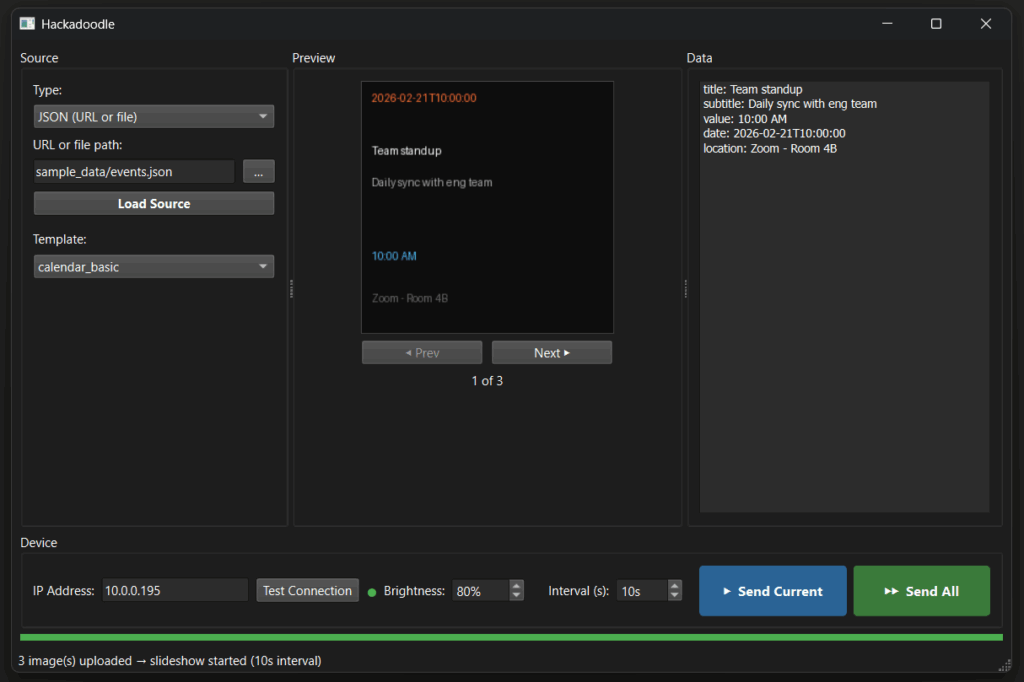

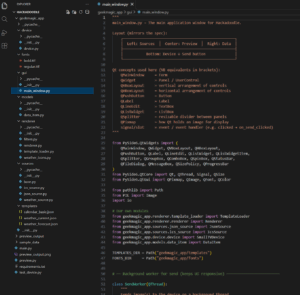

I started off by planning out the project: what I wanted to accomplish, and whether it needed to be written in a specific order (I typically focus on function over form, getting individual functions and features working before merging them into the whole). Then I dove into learning how to set up Python and something called PySide6. I needed the app to parse multiple datasets, build images based on that data, handle different templates, and interface with the device directly. The next phase would be a complex parser to pick and choose the data that shows up in the images, but for now, this was enough.

I set up the project in Claude, laid out the instructions and order of operations, and gave Claude Desktop direct access to a blank folder (and only that folder).

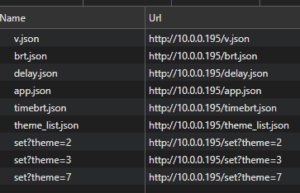

Claude started off by creating the folder structure and reading some existing GitHub projects to determine the best way to build it out. Parsing and normalizing the data is not a big deal, but there is limited information available about the device itself. Yes, there is full firmware, but it’s flashed directly onto the device as a single file. With some probing and by looking at the commands in the browser, we figured out the best way to set the slideshow timing, upload images, delete old images, and so on. Nothing on the GeekMagic is particularly secure, but realistically, that isn’t all that important for its use case.

It built the GUI as well, quickly and easily. At this point I didn’t even need Qt Designer; I had it open but never looked at it. It was able to generate image tiles, and while we worked on fonts and sizes, it could read and interpret ICS and JSON files. As a proof of concept, it “just worked”.
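For anyone curious what “read and interpret ICS and JSON files” boils down to, here is a minimal sketch (not the code Claude generated) that normalizes both sources into one sorted event list using only the Python standard library. It assumes a hypothetical JSON shape, a list of objects with `title` and ISO 8601 `start` fields, and it only pulls `SUMMARY` and `DTSTART` out of each iCalendar `VEVENT`. Times are kept as naive datetimes, so there is no real time-zone handling; the function names are illustrative.

```python
import json
from datetime import datetime


def unfold_ics(text: str) -> list[str]:
    """Rejoin folded iCalendar lines (RFC 5545: a continuation starts with a space or tab)."""
    lines: list[str] = []
    for raw in text.splitlines():
        if raw[:1] in (" ", "\t") and lines:
            lines[-1] += raw[1:]  # drop the single leading whitespace character
        else:
            lines.append(raw)
    return lines


def parse_ics_datetime(value: str) -> datetime:
    """Parse DATE or DATE-TIME values; a trailing 'Z' (UTC) is simply dropped here."""
    value = value.rstrip("Z")
    fmt = "%Y%m%dT%H%M%S" if "T" in value else "%Y%m%d"
    return datetime.strptime(value, fmt)


def parse_ics_events(text: str) -> list[dict]:
    """Collect SUMMARY and DTSTART from each VEVENT as {'title', 'start'} dicts."""
    events: list[dict] = []
    current = None
    for line in unfold_ics(text):
        if line == "BEGIN:VEVENT":
            current = {}
        elif line == "END:VEVENT" and current is not None:
            if "start" in current:  # skip events without a start time
                events.append(current)
            current = None
        elif current is not None and ":" in line:
            name_part, value = line.split(":", 1)
            name = name_part.split(";", 1)[0]  # strip parameters such as ;TZID=...
            if name == "SUMMARY":
                current["title"] = value
            elif name == "DTSTART":
                current["start"] = parse_ics_datetime(value)
    return events


def parse_json_events(text: str) -> list[dict]:
    """Hypothetical JSON shape: [{"title": str, "start": ISO 8601 string}, ...]."""
    return [
        {"title": item["title"], "start": datetime.fromisoformat(item["start"])}
        for item in json.loads(text)
    ]


def merge_events(ics_text: str, json_text: str) -> list[dict]:
    """Normalize both sources into one list, ordered by start time."""
    combined = parse_ics_events(ics_text) + parse_json_events(json_text)
    return sorted(combined, key=lambda e: e["start"])


ics_sample = (
    "BEGIN:VCALENDAR\r\n"
    "BEGIN:VEVENT\r\n"
    "SUMMARY:Dentist appoint\r\n"
    " ment\r\n"
    "DTSTART;TZID=America/Toronto:20250214T090000\r\n"
    "END:VEVENT\r\n"
    "END:VCALENDAR\r\n"
)
json_sample = '[{"title": "Trash day", "start": "2025-02-13T07:00:00"}]'

for event in merge_events(ics_sample, json_sample):
    print(event["start"], event["title"])
```

Once everything shares the same `{"title", "start"}` shape, the tile renderer only has to deal with one format, which is the main payoff of normalizing early.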

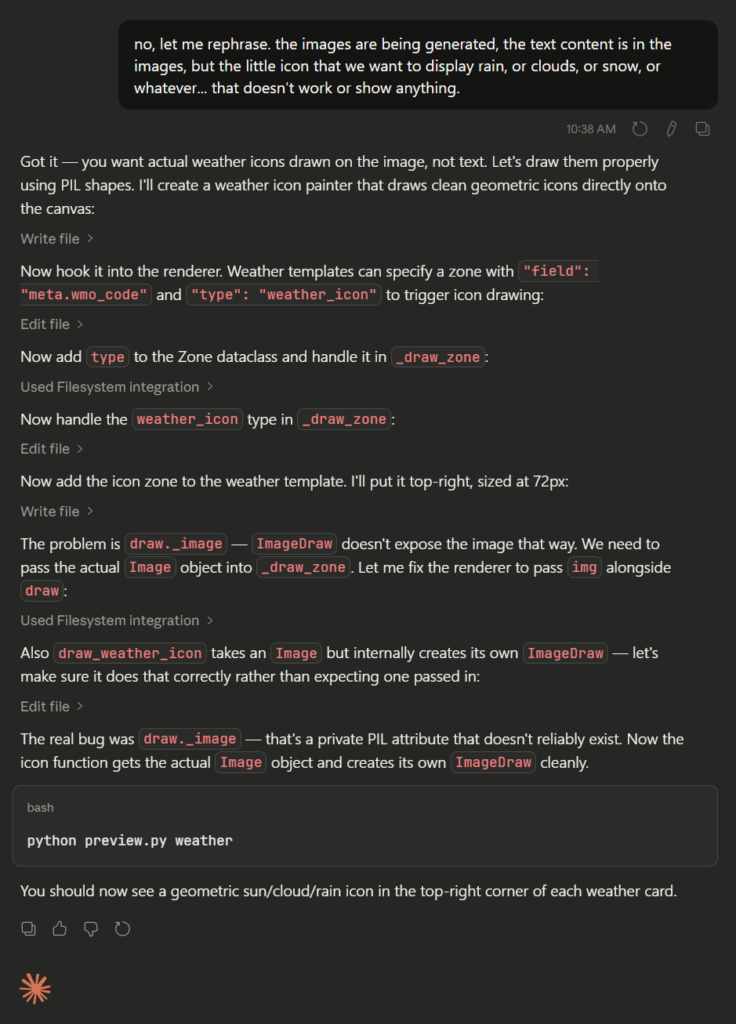

I started running into issues when I tried to explain why a weather tile image wasn’t working: it was supposed to show a picture of a cloud, rain, or snow next to the temperature. I made the mistake of saying the thumbnail wasn’t working, and realized afterwards that Claude had assumed I was having trouble opening the image in Windows. To be fair, Claude did ask me what I meant; I clarified with more direct wording, and we got through that issue.
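The weather tile logic itself is simple to picture. As a rough sketch (again, not the project’s generated code), choosing which of three icons to draw next to the temperature might look like this; the `icons/` folder, the file names, the keyword list, and the function names are all hypothetical.

```python
from pathlib import Path

# Hypothetical icon set: three images stored in an "icons" folder.
ICON_DIR = Path("icons")
ICONS = {"cloud": "cloud.png", "rain": "rain.png", "snow": "snow.png"}


def pick_weather_icon(condition: str) -> Path:
    """Map a free-text condition (e.g. 'Light snow') to one of three icons."""
    text = condition.lower()
    if "snow" in text or "sleet" in text:  # checked first, so mixed precipitation shows snow
        key = "snow"
    elif any(word in text for word in ("rain", "drizzle", "shower")):
        key = "rain"
    else:
        key = "cloud"  # anything else falls back to the cloud icon
    return ICON_DIR / ICONS[key]


def weather_tile_parts(condition: str, temp_c: float) -> tuple[Path, str]:
    """Return the icon path and the temperature label to draw side by side."""
    return pick_weather_icon(condition), f"{round(temp_c)}°C"


print(weather_tile_parts("Light snow", -2.4))
```

Matching on keywords keeps the sketch independent of any particular weather API’s condition codes, at the cost of being coarse; a real feed with numeric codes would be better served by a lookup table.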

At this point I thought to myself, “That’s new.” Why wasn’t it just telling me it had solved the issue and that “everything is great now”?

Let me tell you how often that happens… It is unbelievably frustrating when a model does this repeatedly. Claude is not the worst offender (ahem, ChatGPT), but when I hear things like “OK, I changed this one thing and it’s working now!” 20 times in a conversation, and it still isn’t working, I give up. Or it builds functions around nonexistent filters or actions in WordPress, and eventually tells me it’s a “theoretical method” that could be used to accomplish whatever I’m trying to create.

So I clarified, Claude solved the problem, and we moved on.

Plot twist.

As I was waiting for it to compact the conversation, I looked down and saw something new: Sonnet 4.6? It dawned on me that I was using a new model. It came out a few days ago, and I had already noticed the difference. So far, Anthropic definitely has a winner.

I’m posting the entire codebase to my GitHub. It was built in a single session, using up all of my free messages, in one hour and 14 minutes. The project is not quite complete, but its functionality is fairly polished. Despite having the code open in VS Code, I haven’t yet looked at any of it to understand the logic and structure behind it. I imagine this is how it feels when you build something like fake Netflix to mess with your friends! (I don’t know if Andrew can actually code or not, but my youngest son thought it was absolutely hilarious.)

You know, I read articles like “Top engineers at Anthropic, OpenAI say AI now writes 100% of their code—with big implications for the future of software development jobs” and think to myself just how different things are compared to a few years ago. I no longer have to research, build out syntax and features, and debug; my focus now is on editing, refactoring, auditing, and integrating. I already know what I want to build and how to build it. I’m fluent in the code itself and can iterate and find solutions to problems easily. I just don’t have to do all of it anymore. If I can write an in-depth synopsis of the project, break down the steps, and figure out any key issues that could come up ahead of time, AI tools can now generate a clean project in next to no time.